AI can draft a clinical note in seconds, but speed without security is a liability. Standard AI tools lack HIPAA safeguards, exposing patient data to unauthorized access, missing audit trails, and vendor misuse. For truly HIPAA-compliant AI notes, you need a complete security stack: a Business Associate Agreement (BAA) to bind your vendor legally, access controls to enforce who sees what, and audit logs to prove every interaction. One or two layers won’t protect you. This guide breaks down why all three are non‑negotiable, and how they work together to keep AI notes safe, compliant, and defensible.

1. The Business Associate Agreement (BAA): Your Legal Foundation

Under HIPAA, any AI vendor that creates, receives, maintains, or transmits PHI on your behalf is a Business Associate. Sharing PHI with that vendor without a signed Business Associate Agreement violates both the HIPAA Privacy Rule and the Security Rule.

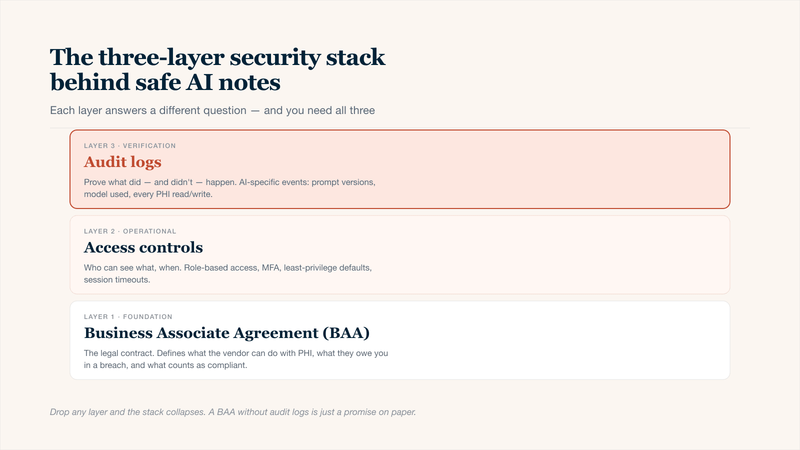

BAA + access controls + audit logs — drop any layer and the stack collapses.

What a Proper AI Vendor BAA Must Include

- Use Restrictions: No training AI models on your clinical notes without explicit opt-in.

- Breach Notification: The vendor must notify you without unreasonable delay, and no later than 60 days after discovering a breach.

- Subcontractor Control: Vendor must have BAAs with all sub-processors (OpenAI, AWS, etc.).

Warning Signs to Look Out For in a Vendor's BAA:

- Allows the vendor to train models on de-identified data with no way for you to opt out.

- No liability for subcontractors' compliance failures.

- Lacks specific provisions for AI-generated content retention.

2. Access Controls: Who Sees What, and When

HIPAA's Privacy Rule limits PHI use and disclosure to the "minimum necessary," and the Security Rule requires technical access controls to enforce that limit. For AI notes, this means controlling access at the user, role, and session level.

Must-Have Access Control Features for AI Notes

- Role-Based Access Control: Physicians see full notes; billers see only codes; researchers see de-identified data.

- Just-in-Time Access: Temporary, auto-revoked approval for sensitive notes.

- Multi-Factor Authentication + SSO: Required for every device generating AI notes.

- Location Restrictions: Block unmanaged personal devices and logins from unexpected countries or IP ranges.
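The role-based and just-in-time rules above can be sketched in a few lines of Python. This is a minimal illustration, not a production access layer: the role names, field names, `ROLE_FIELDS` mapping, and `JitGrant` class are all hypothetical and would need to match your own system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Iterable

# Hypothetical role-to-field mapping; real roles and fields are an assumption.
ROLE_FIELDS = {
    "physician": {"patient_id", "note_text", "codes"},
    "biller": {"codes"},
    "researcher": {"deidentified_text"},
}


@dataclass(frozen=True)
class JitGrant:
    """A temporary approval to open one sensitive note."""
    user_id: str
    note_id: str
    expires_at: datetime

    def is_active(self, now: datetime) -> bool:
        # The grant revokes itself: once expires_at passes, it no longer counts.
        return now < self.expires_at


def visible_fields(note: dict, role: str) -> dict:
    """Return only the fields the role may see; unknown roles see nothing."""
    allowed = ROLE_FIELDS.get(role, set())
    return {key: value for key, value in note.items() if key in allowed}


def can_open_sensitive(user_id: str, note_id: str,
                       grants: Iterable[JitGrant], now: datetime) -> bool:
    """True only if an unexpired grant exists for this user and this note."""
    return any(
        g.user_id == user_id and g.note_id == note_id and g.is_active(now)
        for g in grants
    )


if __name__ == "__main__":
    note = {"patient_id": "p1", "note_text": "Visit summary", "codes": ["99213"]}
    print(visible_fields(note, "biller"))
    now = datetime.now(timezone.utc)
    grant = JitGrant("dr_a", "n1", now + timedelta(minutes=15))
    print(can_open_sensitive("dr_a", "n1", [grant], now))
```

Defaulting unknown roles to an empty field set keeps the check deny-by-default, which is the safer failure mode for a "minimum necessary" policy.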

3. Audit Logs: Proving What Didn't Happen

HIPAA requires compliance documentation to be retained for six years, and audit logs are commonly kept for the same period. For AI notes, logs must capture who viewed, edited, or exported each note, as well as who prompted the AI and with what text.

AI-Specific Audit Trail Requirements

- Prompt Logging: Exact text (including PHI) sent to the AI model, the most commonly missed log.

- Output Logging: AI's raw response before human editing.

- Download/Print/Forward Events: Often overlooked, but every copy of a note that leaves the system needs a record.
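One way to picture these requirements is an append-only log where each entry records the actor, the note, the event type, and (for AI calls) the exact text. The sketch below is an assumption-laden illustration: the `AuditEvent` fields, the `REQUIRED_EVENTS` set, and the in-memory list standing in for a log store are all hypothetical. Because prompts and outputs contain PHI, a real log store would need the same protections as the notes themselves.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List, Optional, Set

# Event types drawn from the list above; the exact names are an assumption.
REQUIRED_EVENTS = {
    "view", "edit", "export",        # who touched the note
    "prompt", "output",              # AI-specific events
    "download", "print", "forward",  # copies leaving the system
}


@dataclass(frozen=True)
class AuditEvent:
    timestamp: str
    user_id: str
    note_id: str
    event: str
    payload: str = ""  # exact prompt text or raw AI output; may contain PHI


def record(log: List[str], user_id: str, note_id: str, event: str,
           payload: str = "", now: Optional[datetime] = None) -> AuditEvent:
    """Append one JSON line to the log and return the recorded entry."""
    if event not in REQUIRED_EVENTS:
        raise ValueError(f"unknown audit event type: {event}")
    stamp = (now or datetime.now(timezone.utc)).isoformat()
    entry = AuditEvent(stamp, user_id, note_id, event, payload)
    log.append(json.dumps(asdict(entry)))
    return entry


def missing_event_types(log: List[str]) -> Set[str]:
    """Report which required event types never appear in the log."""
    seen = {json.loads(line)["event"] for line in log}
    return REQUIRED_EVENTS - seen


if __name__ == "__main__":
    log: List[str] = []
    record(log, "dr_a", "n1", "prompt", "Summarize today's visit")
    record(log, "dr_a", "n1", "output", "Raw draft before editing")
    print(sorted(missing_event_types(log)))
```

A check like `missing_event_types` is useful during vendor evaluation: if "prompt" or "print" never shows up in a sample export of the logs, that event is probably not being captured.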

The Complete Security Stack: How the Layers Work Together

| You Have | But Missing | Result |
|---|---|---|
| BAA Only | Access Controls + Audit Logs | Legal on paper, but no enforcement or proof of who did what. |
| Access Controls Only | BAA + Audit Logs | The vendor can still misuse your data. |
| Audit Logs Only | BAA + Access Controls | You learn about the breach only after it is too late to stop it. |
| All Three | None | Defensible HIPAA-compliant AI notes. |

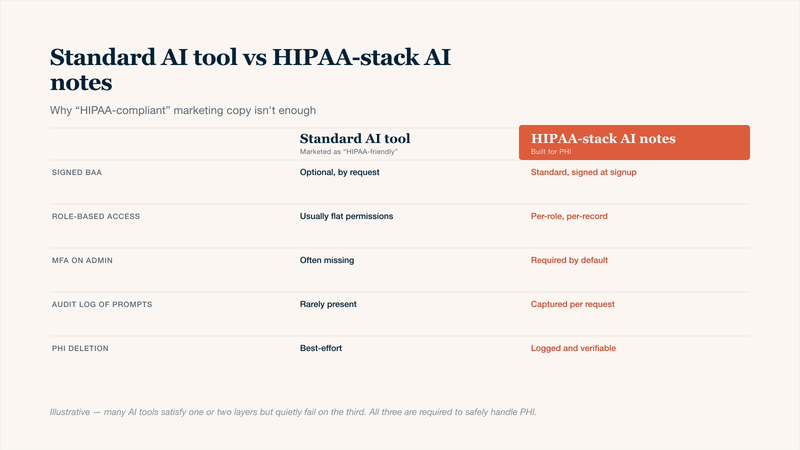

Why Most "HIPAA Compliant AI Notes" Tools Fall Short

Many vendors claim compliance, but here's what they actually miss:

3 Common Vendor Gaps:

- Offer A BAA That Allows Model Training: The fine print often permits using "de-identified" notes for AI improvement, a loophole most clinicians miss.

- Have Audit Logs But Don't Log AI Prompts: You'll see that a note was generated, but not what text was sent to the model, making breach investigations impossible.

- Use RBAC, But Share Tenant Databases: Different customers' data sits in the same database. One misconfigured query can leak PHI across practices.
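The shared-database risk in the last gap can be illustrated with a small sketch. It uses Python's built-in `sqlite3` module and a hypothetical `notes` table and `TenantScopedNotes` wrapper; the idea is that application code never writes a query without a tenant filter, because the wrapper adds it every time. This narrows the risk inside a shared database but is not a substitute for stronger isolation such as separate databases per customer.

```python
import sqlite3
from typing import Optional


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical shared notes table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE notes ("
        " tenant_id TEXT NOT NULL,"
        " note_id TEXT NOT NULL,"
        " body TEXT NOT NULL,"
        " PRIMARY KEY (tenant_id, note_id))"
    )
    return conn


class TenantScopedNotes:
    """Every query is bound to one tenant_id chosen at construction time."""

    def __init__(self, conn: sqlite3.Connection, tenant_id: str) -> None:
        self._conn = conn
        self._tenant_id = tenant_id

    def add(self, note_id: str, body: str) -> None:
        self._conn.execute(
            "INSERT INTO notes (tenant_id, note_id, body) VALUES (?, ?, ?)",
            (self._tenant_id, note_id, body),
        )

    def get(self, note_id: str) -> Optional[str]:
        # The tenant filter is always applied, so a caller cannot forget it.
        row = self._conn.execute(
            "SELECT body FROM notes WHERE tenant_id = ? AND note_id = ?",
            (self._tenant_id, note_id),
        ).fetchone()
        return row[0] if row else None


if __name__ == "__main__":
    conn = make_db()
    practice_a = TenantScopedNotes(conn, "practice_a")
    practice_b = TenantScopedNotes(conn, "practice_b")
    practice_a.add("n1", "Note belonging to practice A")
    print(practice_a.get("n1"))
    print(practice_b.get("n1"))
```

When evaluating a vendor, it is reasonable to ask which of these approaches they use: a tenant filter enforced in one place, database-level row security, or fully separate databases.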

“HIPAA-friendly” isn't HIPAA-safe — the gap shows up across every control on this table.

Conclusion

Relying on just one or two security layers leaves your AI notes exposed. The BAA binds your vendor legally, access controls determine who sees what, and audit logs prove every action. Remove any layer, and the stack collapses. Many tools claim "HIPAA compliance," but few deliver all three with prompt logging, model training prohibitions, and role‑based access. Before committing, verify each layer. A signed BAA means nothing if audit logs are missing or access controls are weak. For safe, defensible HIPAA‑compliant AI notes, all three must work as one.