Group therapy can be a bit chaotic. For clinicians, tracking who said what during a session typically means scribbling down what you can from memory, often missing the one quiet patient or misattributing a breakthrough quote. Multi‑speaker AI diarization addresses this by automatically identifying and labeling each speaker, so that every intervention is attributed to the individual who made it. The result is faster, more accurate AI therapy notes that preserve group dynamics without burning out the therapist. Here's how this innovation is changing group therapy documentation.

What Is Multi-Speaker AI Diarization?

Multi-speaker AI diarization is the process of partitioning an audio stream into homogeneous segments according to speaker identity. It essentially answers the question of "Who spoke when?"

Unlike standard transcription, which produces a single undifferentiated block of text, diarization labels each spoken line with a unique speaker identifier. This turns a confusing jumble of overlapping voices into a clean, readable script.

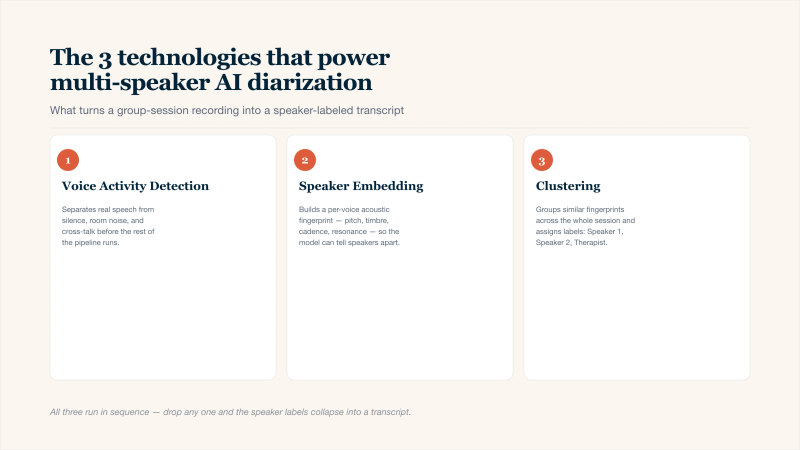

The three audio-AI building blocks that turn a noisy group session into a speaker-labeled transcript.

How It Differs From Standard Transcription:

| Feature | Standard Transcription | Diarized Transcription |
|---|---|---|
| Output | "I feel anxious. I feel angry too." | Speaker A: "I feel anxious." Speaker B: "I feel angry too." |
| Speaker tracking | None | Individual voice fingerprints |
| Clinical utility | Low (cannot attribute quotes) | High (track patient contributions) |

3 Main Technologies:

- Voice Activity Detection (VAD): VAD scans the audio to distinguish speech from silence, background noise, or cross-talk.

- Speaker Embedding: This is like creating a unique "voice fingerprint." The AI extracts acoustic features: pitch, timbre, cadence, and resonance, and converts them into a numerical vector. When Speaker A talks, their vector looks consistently different from Speaker B's.

- Clustering: After the session ends, the AI groups all similar voice fingerprints together. It then assigns labels (Speaker 1, Speaker 2, Therapist) to each cluster. The result is a complete, speaker-labeled transcript ready for clinical review.
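The three stages above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how any particular product works: `detect_speech`, `cosine_similarity`, and `cluster_embeddings` are hypothetical helper names, the "embeddings" are hand-written two-dimensional vectors rather than the output of a neural model, and the energy threshold and similarity cutoff are arbitrary values chosen for the example.

```python
import math

def detect_speech(frame_energies, threshold=0.1):
    """Toy VAD: treat a frame as speech when its energy exceeds a threshold."""
    return [energy > threshold for energy in frame_energies]

def cosine_similarity(a, b):
    """Similarity of two voice-fingerprint vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def cluster_embeddings(embeddings, min_similarity=0.9):
    """Greedy clustering: join the most similar cluster, or start a new one."""
    centroids, counts, labels = [], [], []
    for emb in embeddings:
        best, best_sim = None, min_similarity
        for idx, centroid in enumerate(centroids):
            sim = cosine_similarity(emb, centroid)
            if sim >= best_sim:
                best, best_sim = idx, sim
        if best is None:
            # No existing cluster is similar enough: treat as a new speaker.
            centroids.append(list(emb))
            counts.append(1)
            best = len(centroids) - 1
        else:
            # Update the running mean of the matched cluster.
            n = counts[best]
            centroids[best] = [(c * n + x) / (n + 1)
                               for c, x in zip(centroids[best], emb)]
            counts[best] = n + 1
        labels.append(f"Speaker {best + 1}")
    return labels

# Hypothetical inputs: per-frame energies and per-segment voice vectors.
speech_mask = detect_speech([0.02, 0.5, 0.4, 0.01])
labels = cluster_embeddings([[1.0, 0.1], [0.1, 1.0], [0.9, 0.2], [0.2, 0.9]])
print(speech_mask)  # [False, True, True, False]
print(labels)       # ['Speaker 1', 'Speaker 2', 'Speaker 1', 'Speaker 2']
```

The greedy pass assigns labels as segments arrive, which keeps the example short. A system that clusters after the session ends, as described above, can look at all fingerprints at once before deciding how many speakers there are.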

Why Group Notes Are a Struggle

Unlike individual sessions where one voice dominates, group therapy involves rapid‑fire exchanges and interruptions. Capturing this accurately can be stressful.

The "Who Said What" Paradox: Therapists face an impossible choice during group sessions. They can either:

- Focus on facilitation (maintaining eye contact, reading body language, managing turn-taking) and risk forgetting specific quotes later.

- Focus on documentation (scribbling "CY said X, then MG replied Y") and miss the live non-verbal cues that inform clinical judgment.

Most therapists try to do both. The result is fragmented attention and incomplete notes.

Specific Issues to Note

- Delayed Documentation: Notes written hours after the session rely on memory, which declines rapidly for speaker attribution.

- Bias Toward Verbal Patients: Therapists remember and record quotes from talkative group members while quieter participants fade from recall.

- Lost Cross-talk Dynamics: When two patients speak simultaneously or interrupt, manual notes capture only the louder or finishing speaker.

- Supervision Gaps: Trainees cannot easily review "who challenged whom" without an accurate transcript.

How AI Diarization Specifically Enhances Group Therapy Notes

Here is how AI notes for therapists benefit group sessions.

3 Key Clinical Improvements

Beyond simple time savings, multi‑speaker AI diarization unlocks clinical insights that were previously difficult to capture without a dedicated observer in the room.

1. Cross-Talk Capture

Standard transcription collapses overlapping speech into gibberish. Advanced diarization models can isolate and attribute two people speaking simultaneously. For example: Speaker A: "I think you're wrong..." overlapped with Speaker B: "Let me finish..." becomes two separate, readable lines. This preserves the emotional aspect of conflict.
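Once a diarizer has produced timestamped, speaker-labeled segments, flagging cross-talk is an interval-overlap check. The sketch below assumes that output already exists; the `Segment` class and `find_cross_talk` function are hypothetical names for illustration, and separating the overlapped audio itself is the model's job, not something this code does.

```python
from dataclasses import dataclass

@dataclass
class Segment:
    speaker: str
    start: float  # seconds from session start
    end: float
    text: str

def find_cross_talk(segments):
    """Return (earlier, later, overlap_seconds) for overlapping turns
    spoken by two different speakers."""
    ordered = sorted(segments, key=lambda s: s.start)
    overlaps = []
    for i, a in enumerate(ordered):
        for b in ordered[i + 1:]:
            if b.start >= a.end:
                break  # sorted by start, so nothing later can overlap `a`
            if a.speaker != b.speaker:
                overlaps.append((a, b, min(a.end, b.end) - b.start))
    return overlaps

# Hypothetical diarizer output for a short exchange.
segments = [
    Segment("Speaker A", 10.0, 13.0, "I think you're wrong..."),
    Segment("Speaker B", 12.0, 14.5, "Let me finish..."),
    Segment("Speaker A", 15.0, 16.0, "Okay."),
]
cross_talk = find_cross_talk(segments)
for a, b, seconds in cross_talk:
    print(f"{b.speaker} overlapped {a.speaker} for {seconds:.1f}s")
```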

2. Longitudinal Comparison

Once speaker labels are mapped to the same patient in each session, last week's "Speaker A" (Patient John) can be compared to this week's "Speaker A" across dozens of sessions. The AI tracks changes in speaking frequency and average response latency. Did John speak less after his medication adjustment? Did Mary interrupt more following a trigger event? These trend lines are valuable inputs for treatment planning.
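As a rough sketch of how speaking frequency and response latency could be derived from a diarized transcript, the example below counts turns per speaker and averages the gap between the previous speaker finishing and the next one starting. The `session_metrics` function, the tuple layout, and the sample data are assumptions made for illustration, and it presumes labels have already been mapped to real identities.

```python
from collections import defaultdict

def session_metrics(turns):
    """turns: list of (speaker, start_sec, end_sec), sorted by start time.
    Returns per-speaker turn count, talk time, and average response latency."""
    stats = defaultdict(lambda: {"turns": 0, "talk_time": 0.0, "latencies": []})
    prev = None
    for speaker, start, end in turns:
        entry = stats[speaker]
        entry["turns"] += 1
        entry["talk_time"] += end - start
        # Latency: gap between the previous speaker finishing and this turn.
        if prev is not None and prev[0] != speaker:
            entry["latencies"].append(max(0.0, start - prev[2]))
        prev = (speaker, start, end)
    return {
        spk: {
            "turns": e["turns"],
            "talk_time": e["talk_time"],
            "avg_latency": (sum(e["latencies"]) / len(e["latencies"])
                            if e["latencies"] else None),
        }
        for spk, e in stats.items()
    }

# Hypothetical data for two sessions, one week apart.
week1 = [("Therapist", 0.0, 5.0), ("John", 6.0, 10.0),
         ("Therapist", 10.5, 12.0), ("John", 13.5, 15.5)]
week2 = [("Therapist", 0.0, 5.0), ("John", 8.0, 9.0)]

m1, m2 = session_metrics(week1), session_metrics(week2)
turn_change = m2["John"]["turns"] - m1["John"]["turns"]
print(turn_change)                                           # -1
print(m1["John"]["avg_latency"], m2["John"]["avg_latency"])  # 1.25 3.0
```

A drop in turns together with a longer latency is only a prompt for clinical attention; the numbers do not explain why the change happened.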

3. Intervention Timing

The AI timestamps every utterance. A therapist can ask: "When I used the cognitive reframing technique at 14:32, which patient responded first?" Or, "Did my empathic statement at 22:10 reduce cross‑talk or increase it?" This turns therapy from an art into an evidence‑based, self‑correcting practice.
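A question like "who responded first after 14:32?" reduces to a simple lookup over timestamped utterances. The following is a minimal sketch, assuming each utterance is a dictionary with `speaker`, `start` (in seconds), and `text` keys; `to_seconds` and `first_responder` are hypothetical helper names, not part of any specific tool.

```python
def to_seconds(timestamp):
    """Convert an "MM:SS" timestamp to seconds."""
    minutes, seconds = timestamp.split(":")
    return int(minutes) * 60 + int(seconds)

def first_responder(utterances, intervention_time, therapist="Therapist"):
    """Return the earliest non-therapist utterance that starts after the
    intervention timestamp, or None if nobody responded."""
    t = to_seconds(intervention_time)
    later = [u for u in utterances
             if u["start"] > t and u["speaker"] != therapist]
    return min(later, key=lambda u: u["start"]) if later else None

# Hypothetical excerpt around an intervention at 14:32 (872 seconds).
utterances = [
    {"speaker": "Therapist", "start": 872, "text": "What else could that mean?"},
    {"speaker": "Mary", "start": 881, "text": "I hadn't thought of that."},
    {"speaker": "John", "start": 878, "text": "Maybe she was just busy."},
]
reply = first_responder(utterances, "14:32")
print(reply["speaker"], reply["start"] - to_seconds("14:32"))  # John 6
```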

Operational Wins for Practices

While clinicians care about clinical outcomes, practice owners care about sustainability. AI diarization delivers on both fronts.

- Reduced After-Hours Documentation: The average therapist spends 5 hours per week on notes outside of paid clinical time. AI diarization cuts that time, freeing hours you can reinvest in patient care or clinician wellness.

- Objective Data for Supervision and Training: New therapists often struggle to recall group dynamics accurately during supervision. With a diarized transcript, the supervisor can jump directly to "Speaker A's third intervention" or "the moment when conflict escalated." This accelerates learning and provides measurable benchmarks for competency.

- Risk Management and Legal Protection: In the event of a patient complaint or board review, a verbatim, speaker-attributed record of exactly what was said (and by whom) is far more defensible than a therapist's paraphrased summary written hours later.

Critical Considerations & Limitations

Clinicians must adopt AI therapy notes with eyes open to their risks and limits.

The Danger of Training AI on Actual Patient Voices Without Consent:

Hidden in many free transcription tools is a clause: "We may use your data to improve our models." If an AI vendor trains on your group therapy audio, patient voices become part of a commercial product. Without patient authorization, this is likely a HIPAA violation, and it is an ethical breach.

- Required Action: Before using any tool, get written confirmation that your audio will not be used for model training and that all data is deleted after processing (or stored only per your retention policy).

Accuracy Nuances

Where it Fails:

- Severe Dysphonia or Other Voice Disorders: Conditions that alter vocal tract resonance (e.g., spasmodic dysphonia, severe laryngitis) can break speaker embedding. The AI may misclassify the same person as two different speakers from one session to the next.

- Identical Twins (or Very Similar Voices): Diarization relies on acoustic differences. If two voices are nearly identical in pitch, timbre, and cadence, the AI will struggle. It may merge them into one speaker or randomly split them.

- Heavy Accents or Non-Dominant Language: Models trained primarily on standard American or British English have higher error rates with strong regional accents or when patients switch between languages mid-sentence (code-switching).

- Poor Microphone Placement: A single omni-directional microphone in the center of a circle works reasonably well. But if the room is echoey, if patients sit far apart, or if someone whispers, diarization accuracy drops noticeably.

Note: Always review the diarization output.

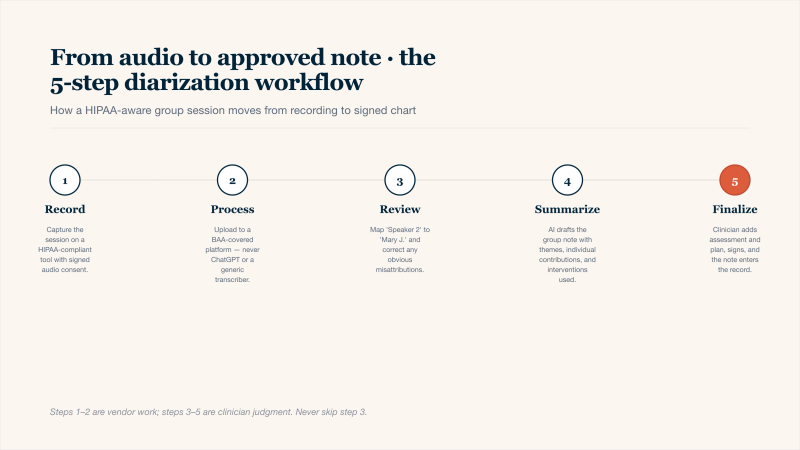

Implementation Workflow: From Audio to Approved Notes

- Record: Use a HIPAA-compliant AI therapy note tool. Obtain signed consent specifically for AI-processed audio recording and record in a quiet room.

- Process: Upload to a platform with a signed Business Associate Agreement (BAA) under HIPAA. Never use generic consumer tools like ChatGPT.

- Review: AI outputs a labeled transcript. The therapist maps speakers to real names (e.g., "Speaker 2" → "Mary J.") and deletes obvious misattributions.

- Summarize: AI generates a draft note including group themes, individual contributions, and interventions used. The draft must be clearly marked "AI-Generated – Requires Review."

- Finalize: The clinician adds clinical judgment, assessment, and treatment plan. After full review and signature, the note enters the medical record, and the audio and transcript are then deleted.

Five steps from a group-session recording to a signed, speaker-attributed note — clinician judgment stays in the loop.
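The Review and Summarize steps can be illustrated with a small sketch: the clinician supplies a mapping from generic labels to names, any label left unmapped is reported rather than guessed, and the rendered draft always starts with the review banner. The function names, the tuple-based transcript format, and the sample lines are hypothetical; a real tool would have its own data model.

```python
DRAFT_BANNER = "AI-Generated – Requires Review"

def apply_speaker_names(transcript, name_map):
    """Replace generic labels with clinician-confirmed names.
    Unmapped labels are kept as-is and returned for follow-up."""
    renamed, unmapped = [], set()
    for speaker, text in transcript:
        if speaker in name_map:
            renamed.append((name_map[speaker], text))
        else:
            renamed.append((speaker, text))
            unmapped.add(speaker)
    return renamed, sorted(unmapped)

def render_draft(renamed):
    """Render the transcript as text, with the review banner on top."""
    lines = [DRAFT_BANNER]
    lines += [f"{speaker}: {text}" for speaker, text in renamed]
    return "\n".join(lines)

# Hypothetical diarized transcript and clinician-supplied mapping.
transcript = [
    ("Speaker 1", "Welcome back, everyone."),
    ("Speaker 2", "I feel anxious."),
    ("Speaker 3", "I feel angry too."),
]
name_map = {"Speaker 1": "Therapist", "Speaker 2": "Mary J."}

renamed, unmapped = apply_speaker_names(transcript, name_map)
draft = render_draft(renamed)
print(draft)
print("Still unmapped:", unmapped)  # Still unmapped: ['Speaker 3']
```

Reporting unmapped labels instead of silently dropping them keeps the clinician in the loop, which matches the review requirement in the workflow above.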

Conclusion

By accurately answering "who said what," multi‑speaker AI diarization frees clinicians from scribbling and fragmented memory. Therapists can be truly present in the room, draw on objective data that reveals silent or dominant patients, and spend less time on documentation. Privacy risks require vigilance, and clinical judgment remains irreplaceable. However, for group practices willing to implement responsibly, AI therapy notes with diarization offer something rare: better documentation and better patient care, simultaneously.