Before building Twofold, we spent months talking to solo practitioners and small practices about their documentation tools. The vast majority had the same complaint: hospital-grade AI scribes didn't work for practices like theirs.

Dr. Sarah Chen (name changed for privacy) runs a three‑physician family medicine practice in Portland. Last year, she spent $15,000 and three months implementing an AI documentation tool that worked beautifully at the hospital system down the street.

It failed in six weeks.

Not because the technology was bad. Because it was built for an entirely different universe.

Small practices aren't baby hospitals. They're fundamentally different organizations with different workflows, economics, and needs.

This article breaks down exactly why hospital-grade AI fails in small practices, and what design philosophy actually works for physicians who wear five hats.

The Structural Gap

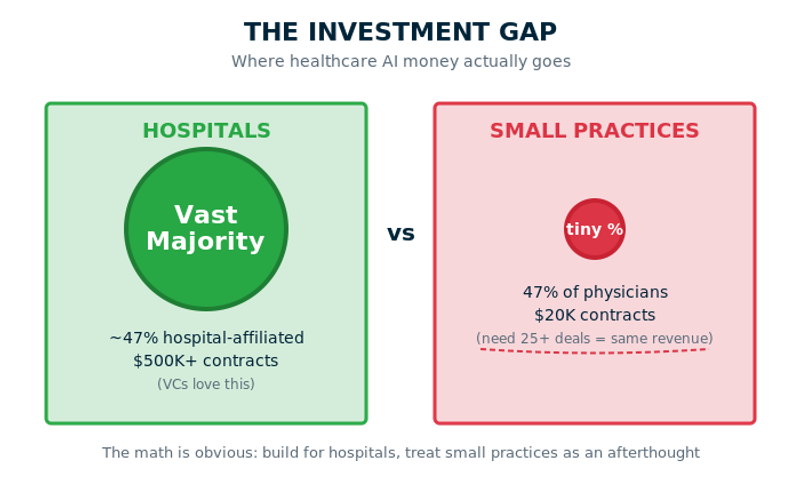

There are more than 400,000 physicians in US practices with 10 or fewer doctors. According to the 2024 AMA Physician Practice Benchmark Survey, that's 47% of all practicing physicians, nearly half. Yet healthcare AI investment overwhelmingly targets large health systems.

The gap isn't accidental. It's economic.

A single hospital contract can generate $500K+ in annual recurring revenue. The same revenue from small practices requires 25+ separate deals. From a VC perspective, the math is obvious: build for hospitals, treat small practices as an afterthought.

The result? Doctors like Sarah end up trying to make tools designed for 200-physician hospital departments work in three-person clinics where everyone wears five hats.

Why Hospital AI Generates 287-Word Notes

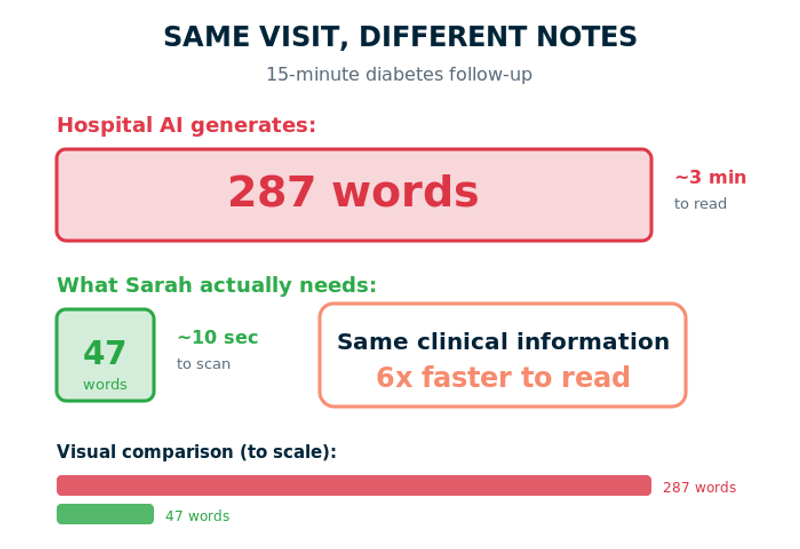

Here's what happened when Sarah's practice tried hospital‑grade AI for a routine 15‑minute diabetes follow‑up:

HISTORY OF PRESENT ILLNESS: Patient is a 54-year-old male with a past medical history significant for type 2 diabetes mellitus, hypertension, hyperlipidemia, and obesity who presents today for routine diabetes management follow-up. Patient reports good compliance with metformin 1000mg twice daily and reports checking blood sugars at home which have been ranging 110-140 in the morning. Denies any episodes of hypoglycemia. Reports mild tingling in feet bilaterally but denies any other complications... [287 words total]

Why so verbose? Because most training data comes from large health systems and teaching hospitals. These notes serve multiple audiences: the endocrinologist who sees the patient next month, the care coordinator scheduling labs, the quality metrics team, the billing specialist, possibly medical students.

The AI learned that more detail means better documentation. It optimized for comprehensive handoffs and billing code justification, not clinical efficiency.

But Sarah's practice? She's all of those people. She doesn't need context explained because she was in the room. She needs something scannable in 10 seconds:

HPI: DM2 follow-up. Metformin 1000mg BID. Home glucose 110-140 fasting. Mild peripheral neuropathy, stable. Assessment: A1C 7.2, improved from 7.8. Continue current regimen. [47 words]

Same clinical information. Six times faster to read. This isn't about "summarization." It reflects fundamentally different training data and use cases.

Workflow: 10 People vs. 3

Let me show you what a patient encounter looks like in each environment.

Three people do the work of ten. The physician isn't just diagnosing. They're managing the entire patient relationship.

Hospital AI scribes break down because they assume specialization and handoffs. A physician assistant told us: "Support kept asking me to 'check with my systems administrator.' I AM the systems administrator. I'm also the doctor, the billing person, and the one who orders supplies."

Hospital vs Small Practice Tool Onboarding: Reality Check

| Hospital Implementation | Small Practice Reality |
|---|---|
| 6-month rollout | Tuesday afternoon between patients |
| Dedicated implementation team | Office manager (also does HR, billing) |
| IT department on call | Doctor fixes it herself |
| 4-hour protected training | YouTube video |
| Staged deployment | Must work in 30 minutes |

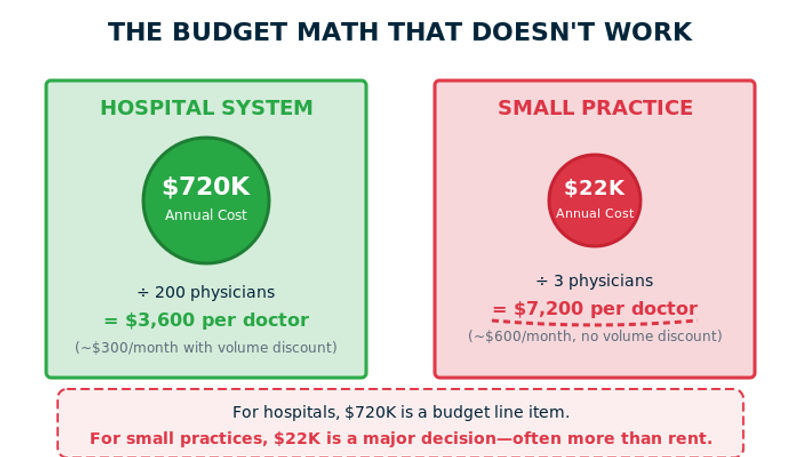

The Budget Trap

I watched a hospital debate a $300K AI implementation for 45 minutes. The CFO's concern: ROI in 12 or 18 months?

The next day, I met a solo practitioner who had spent three weeks agonizing over a $200/month subscription. She'd just paid $15K for malpractice insurance, her rent had increased ($400/month), and reimbursement rates hadn't moved in five years.

"Operational costs keep rising (rent, wages, supplies) but insurance reimbursement rates are frozen. Every $200 monthly subscription is a real decision."

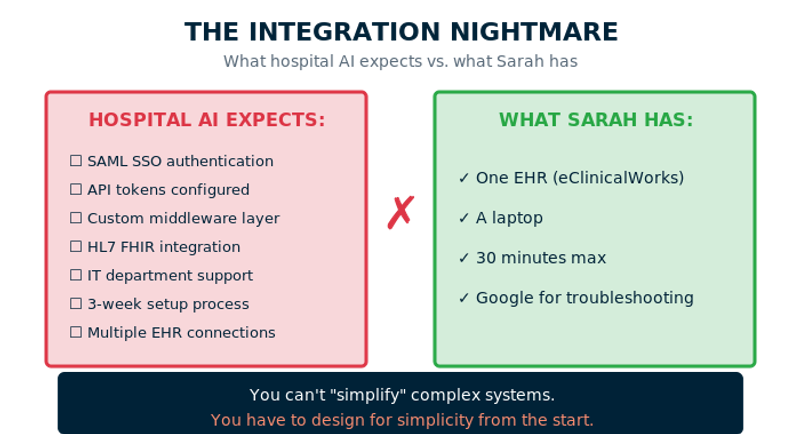

The Integration Nightmare

Most AI scribe companies build for hospitals first, which means their setup flows include SAML SSO, API tokens, custom middleware, and HL7 configuration.

Sarah doesn't have any of that. She needs something that works with one EHR, period. No middleware, no enterprise authentication, no three‑week setup process.

The trap: vendors build for hospital complexity, then try to "simplify" for small practices. But simplicity can't be retrofitted onto a complex system. It has to be designed in from the start.

The Customization Paradox

Hospitals lean heavily toward standardization for compliance, quality metrics, and large‑team coordination.

Small practices need personalization: bullet points for the pediatrician, narrative notes for the family medicine doc, procedure‑focused documentation for the orthopedist. This isn't inconsistency to fix. It's how doctors think.

"The AI notes don't sound like me. I spent 20 years developing my clinical voice. These read like someone learned therapy from a textbook."

"My notes are fairly short and the AI-generated ones have a lot of fluff. I copy and paste 70% anyway."

Hospital‑grade AI optimizes for compliance and uniformity. Small practice AI must optimize for personal clinical style while meeting requirements. That's not a feature request. It's a different product.

Why Small Practices Are Overlooked in Healthcare AI Investment

| Why VCs Fund Hospital-Focused AI | Why They Avoid Small Practices |
|---|---|
| One enterprise sale = $500K+ ARR | Same sales effort, fraction of the revenue |
| "Deployed across 200 physicians at Major Medical Center" sounds impressive | "200 individual practitioners" sounds fragmented |
| Clear exit opportunities through health system acquisitions | Fragmented market, lower contract values |

Result: Nearly half of US physicians work in small practices, but the vast majority of AI investment targets large health systems.

Building for Constraints

After hundreds of conversations, we realized the solution isn't "hospital tools, but simpler." It's building specifically for small-practice constraints.

When we built Twofold, we talked only to solo practitioners and small practices. We learned they don't want transcripts of everything said, don't need 17 EHR integrations, and don't have time for implementation consultants. They need notes that match how they actually think and write.

What's at Stake

Dr. Martinez (name changed for privacy) runs a solo internal medicine practice:

| Scenario | Result |
|---|---|
| Before AI | 2.5 hours of documentation every night; considering reducing her patient load |
| Hospital AI trial | Notes 3x longer than her style; 15-20 minutes of editing each. "Like having an intern who writes everything down but doesn't know what matters." Canceled after 2 months. |
| With Twofold | Notes match her style and are ready before the next patient; she finishes by 6 PM and takes on 3 more patients per day |

The difference isn't features. It's design philosophy.

The Real Question

Small practices aren't baby hospitals. They're fundamentally different organizations with different workflows, economics, and needs.

The physicians most likely to know their patients deeply, provide genuine continuity of care, and practice medicine the way they believe it should be practiced? They're working in small practices. And they're being squeezed out by tech inequality.

The question isn't whether small practices deserve better tools. It's whether anyone will build them when the VC math points elsewhere.

Because right now, nearly half of all doctors are watching innovation happen to everyone but them.